In this article, we will share several ideas on how to download files with Playwright. Automating file downloads can sometimes be confusing. You need to handle a download location, download multiple files simultaneously, support streaming, and even more. Unfortunately, not all the cases are well documented. Let's go through several examples and take a deep dive into Playwright's APIs used for file download.

This guide is a part of the series on web scraping and file downloading with different web drivers and programming languages. Check out the other articles in the series:

- How to download a file with Selenium in Python?

- How to download a file with Puppeteer?

- How to download a file with Playwright?

Downloading a file after the button click

The pretty typical case of a file download from the website is leading by the button click. By the fast Google'ing of the sample files storages I've found the following resource: https://file-examples.com/

Let's use it for further code snippets.

Our goal is to go through the standard user's path while the file download: select the appropriate button, click it and wait for the file download. Usually, those files are download to the default specified path. Still, it might be complicated to use while dealing with cloud-based browsers or Docker images, so we need a way to intercept such behavior with our code and take control over the download.

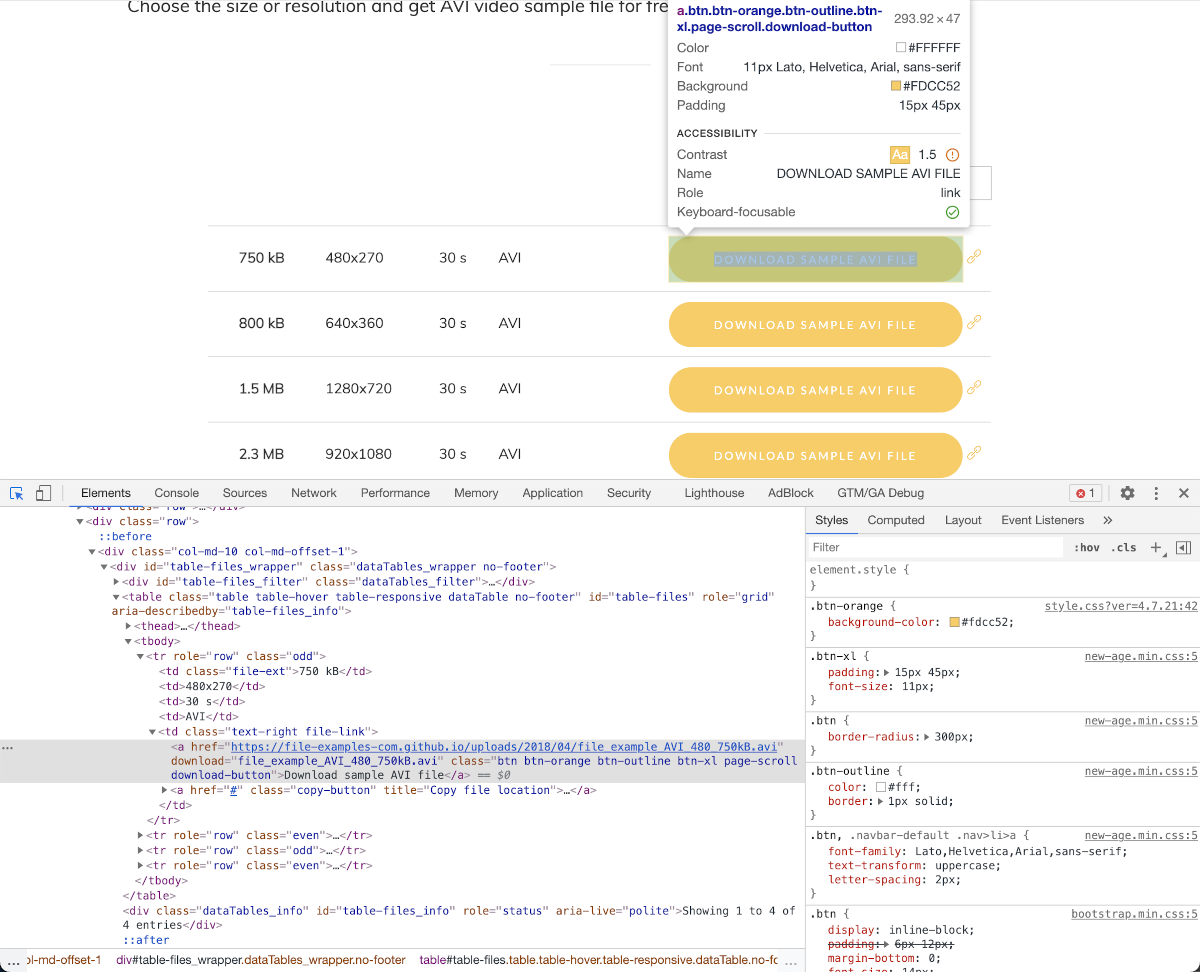

To click a particular button on the web page, we must distinguish it by the CSS selector. Our desired control has a CSS class selector .btn.btn-orange.btn-outline.btn-xl.page-scroll.download-button or simplified one .download-button:

Let's download the file with the following snippet and check out a path of the downloaded file:

const playwright = require('playwright');

const pageWithFiles = 'https://file-examples.com/index.php/sample-video-files/sample-avi-files-download/';

(async () => {

const browser = await playwright['chromium'].launch();

const context = await browser.newContext({ acceptDownloads: true });

const page = await context.newPage();

await page.goto(pageWithFiles);

const [ download ] = await Promise.all([

page.waitForEvent('download'), // wait for download to start

page.click('.download-button')

]);

// wait for download to complete

const path = await download.path();

console.log(path);

await browser.close();

})();

This code snippet shows us the ability to handle file download by receiving the Download object that is emitted by page.on('download') event.

Browser context must be created with the acceptDownloads set to true when user needs access to the downloaded content. If acceptDownloads is not set, download events are emitted, but the actual download is not performed and user has no access to the downloaded files.

After executing this snippet, you'll get the path that is probably located somewhere in the temporary folders of the OS.

For my case with macOS, it looks like the following:

/var/folders/3s/dnx_jvb501b84yzj6qvzgp_w0000gp/T/playwright_downloads-wGriXd/87c96e25-5077-47bc-a2d0-3eacb7e95efa

Let's define something more reliable and practical by using saveAs method of the download object. It's safe to use this method until the complete download of the file.

const playwright = require('playwright');

const pageWithFiles = 'https://file-examples.com/index.php/sample-video-files/sample-avi-files-download/';

const reliablePath = 'my-file.avi';

(async () => {

const browser = await playwright['chromium'].launch();

const context = await browser.newContext({ acceptDownloads: true });

const page = await context.newPage();

await page.goto(pageWithFiles);

const [ download ] = await Promise.all([

page.waitForEvent('download'), // wait for download to start

page.click('.download-button')

]);

// save into the desired path

await download.saveAs(reliablePath);

// wait for the download and delete the temporary file

await download.delete()

await browser.close();

})();

Awesome!

The file will be downloaded to the root of the project with the filename my-file.avi and we don't have to be worried about copying it from the temporary folder.

But can we simplify it somehow? Of course. Let's download it directly!

Direct file download

You've probably mentioned that the button we're clicked at the previous code snippet already has a direct download link:

<a href="https://file-examples-com.github.io/uploads/2018/04/file_example_AVI_480_750kB.avi" download="file_example_AVI_480_750kB.avi" class="btn btn-orange btn-outline btn-xl page-scroll download-button">Download sample AVI file</a>

So we can use the href value of this button to make a direct download instead of using Playwright's click simulation.

To make a direct download, we'll use two native NodeJS modules, fs and https, to interact with a filesystem and file download.

Also, we're going to use page.$eval function to get our desired element.

const playwright = require('playwright');

const https = require('https');

const fs = require('fs');

const pageWithFiles = 'https://file-examples.com/index.php/sample-video-files/sample-avi-files-download/';

const reliablePath = 'my-file.avi';

(async () => {

const browser = await playwright['chromium'].launch();

const context = await browser.newContext({ acceptDownloads: true });

const page = await context.newPage();

await page.goto(pageWithFiles);

const file = fs.createWriteStream(reliablePath);

const href = await page.$eval('.download-button', el => el.href);

https.get(href, function(response) {

response.pipe(file);

});

await browser.close();

})();

The main advantage of this method is that it is faster and simple than the Playwright's one. Also, it simplifies the whole flow and decouples the data extraction part from the data download. Such decoupling makes available decreasing proxy costs, too, as it allows to avoid using proxy while data download (when the CAPTCHA or Cloudflare check already passed).

Downloading multiple files in parallel

While preparing this article, I've found several similar resources that claim single-threaded problems while the multiple files download.

NodeJS indeed uses a single-threaded architecture, but it doesn't mean that we have to spawn several processes/threads in order to download several files in parallel.

All the I/O processing in the NodeJS is asynchronous (when you're making the invocation correctly), so you haven't to worry about parallel programming while downloading several files.

Let's extend the previous code snippet to download all the files from the pages in parallel. Also, we'll log the events of the file download start/end to ensure that the downloading is processing in parallel.

const playwright = require('playwright');

const https = require('https');

const fs = require('fs');

const pageWithFiles = 'https://file-examples.com/index.php/sample-video-files/sample-avi-files-download/';

const reliablePath = 'my-file.avi';

(async () => {

const browser = await playwright['chromium'].launch();

const context = await browser.newContext({ acceptDownloads: true });

const page = await context.newPage();

await page.goto(pageWithFiles);

const hrefs = await page.$$eval('.download-button', els => els.map(el => el.href));

hrefs.forEach((href, index) => {

const filePath = `${reliablePath}-${index}`;

const file = fs.createWriteStream(filePath);

file.on('pipe', (src) => console.log(`${filePath} started`));

file.on('finish', (src) => console.log(`${filePath} downloaded`));

https.get(href, function(response) {

response.pipe(file);

});

});

await browser.close();

})();

As expected, the output will be similar to the following:

my-file.avi-0 started

my-file.avi-1 started

my-file.avi-3 started

my-file.avi-2 started

my-file.avi-0 downloaded

my-file.avi-1 downloaded

my-file.avi-2 downloaded

my-file.avi-3 downloaded

Voilà! The NodeJS itself handles all the I/O concurrency.

Conclusion

Downloading a file using Playwright is smooth and a simple operation, especially with a straightforward and reliable API. Hopefully, my explanation will help you make your data extraction more effortless, and you'll be able to extend your web scraper with file downloading functionality.

I'd suggest further reading for the better Playwright API understanding:

- Playwright's Download

- How to download a file with Javascript

- How to use a proxy in Playwright

- Web browser automation with Python and Playwright

Happy web scraping, and don't forget to change the fingerprint of your browser 🕵️